From Constant Fires to Confident Releases: How We Turned Around a Caribbean E-Commerce App

When this client first reached out to us, they were not looking for a QA agency in the traditional sense. They had already tried that route. What they needed — though they did not quite have the words for it yet — was someone to own their quality problem completely. Not consult on it. Not report on it. Own it.

They are a growing e-commerce business serving customers across the Caribbean. Their app is not a nice-to-have — it is their primary sales channel. When it works well, they grow. When it does not, they lose orders, lose trust, and lose ground to competitors who are always one tap away on the same phone screen.

By the time we spoke, production incidents had become a weekly ritual. Every release introduced something new. Sometimes it was a checkout flow breaking on specific device types. Sometimes it was payment confirmations not arriving. Sometimes it was the app becoming unusable under the kind of traffic spike that happens during a promotion, exactly when you need it most.

Their development vendor was working hard. Nobody was being lazy. But effort without a quality system is like bailing water without plugging the hole.

"Every sprint, we were fixing last release while trying to build the next one. New features kept getting pushed. The team was exhausted and the product felt like it was going backwards."

— Client's Product Lead, first briefing call

What the situation actually looked like

Before proposing anything, we spent time understanding the problem properly. Too many QA engagements start with a solution — a tool, a framework, a test plan — before anyone has clearly defined what is actually broken and why.

What we found was a classic stability debt spiral. The development team was under pressure to deliver features. With no structured QA gate, features were being shipped with optimistic testing — the happy path worked, so it went out. Edge cases, device-specific behaviour, performance under load, and third-party integration failures were all being discovered by real users in production instead of by testers in staging.

Each production incident created emergency fixes. Emergency fixes meant unplanned work. Unplanned work meant planned features slipped. Slipped features meant more pressure to ship faster next sprint. And faster shipping with no QA gate meant more production incidents. The wheel kept turning.

The four core problems we diagnosed:

Testing was ad hoc and release-dependent. There was no structured suite covering critical user journeys, regression scenarios, or edge cases specific to the Caribbean market — including network variability, local payment methods, and device distribution.

Payment failures were among the most frequent production complaints, yet the payment gateway integration had never been tested under realistic failure conditions — declined cards, session timeouts, network drops mid-transaction, or currency handling edge cases.

Nobody knew how the app behaved under load. Promotions and flash sales were driving traffic spikes that the infrastructure had never been tested against. Performance degradation was only discovered when real customers were already experiencing it.

Releases were shipped when the dev team said they were ready. There was no independent quality gate, no release readiness criteria, and no one accountable for the production health of what went live.
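Release readiness criteria are easiest to enforce when they are written down as explicit, checkable rules rather than left as a judgement call at the end of a sprint. The sketch below is a minimal Python illustration of such a gate, not the client's actual criteria. The three checks (critical-journey pass rate, open blocker count, p95 latency) and their thresholds are hypothetical placeholders that a real team would agree per product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReleaseCriteria:
    # Hypothetical thresholds for illustration only.
    min_critical_journey_pass_rate: float = 1.0  # every critical journey must pass
    max_open_blockers: int = 0                   # no open severity-1 defects
    max_p95_latency_ms: float = 800.0            # p95 latency at expected peak load


@dataclass(frozen=True)
class ReleaseCandidate:
    critical_journeys_passed: int
    critical_journeys_total: int
    open_blockers: int
    p95_latency_ms: float


def evaluate_release(rc: ReleaseCandidate,
                     criteria: ReleaseCriteria = ReleaseCriteria()) -> list[str]:
    """Return the criteria this candidate fails; an empty list means the gate passes."""
    failures = []
    total = rc.critical_journeys_total
    pass_rate = rc.critical_journeys_passed / total if total else 0.0
    if pass_rate < criteria.min_critical_journey_pass_rate:
        failures.append(f"critical journeys: {rc.critical_journeys_passed}/{total} passed")
    if rc.open_blockers > criteria.max_open_blockers:
        failures.append(f"open blockers: {rc.open_blockers}")
    if rc.p95_latency_ms > criteria.max_p95_latency_ms:
        failures.append(f"p95 latency {rc.p95_latency_ms:.0f} ms exceeds "
                        f"{criteria.max_p95_latency_ms:.0f} ms")
    return failures


# Usage: a candidate with one failing journey and one open blocker is held back.
candidate = ReleaseCandidate(critical_journeys_passed=47, critical_journeys_total=48,
                             open_blockers=1, p95_latency_ms=650)
for reason in evaluate_release(candidate):
    print("BLOCKED:", reason)
```

The value of a gate like this is less the code than the fact that "ready" stops meaning "the dev team says so" and starts meaning a list of conditions anyone can check.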

How we approached it — and in what order

First: Take ownership, not just tickets

The first thing we did was establish ourselves as the accountable quality partner, not a service desk that processes test cases. We embedded ourselves in their team's communication channels, attended sprint planning, reviewed upcoming feature scopes, and made it our job to understand the business context behind every release. Quality decisions cannot be made in a vacuum. You need to know what a payment failure costs this business on a Wednesday evening versus a Saturday afternoon promotion.

Building a test coverage baseline that actually reflected their users

We built a structured test suite from scratch — covering the full customer journey from product discovery through to post-purchase. Critically, we built it around the reality of their user base: the device types most common in the Caribbean market, the network conditions their customers actually experience, the local payment methods in use, and the specific flows where previous incidents had clustered.

This is the part most QA processes miss. Generic test cases catch generic bugs. If your users are predominantly on mid-range Android devices with variable mobile data connections making payments via regional gateways, your test suite needs to reflect that — not assume a perfectly connected iPhone with a Visa card.
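One way to make a suite reflect the real user base is to weight the test matrix by how often each combination actually occurs. This minimal Python sketch multiplies usage shares for device class, network condition and payment method, then ranks the combinations so the most common real-world paths are tested first. The share figures and category names are invented for illustration. Multiplying them also assumes the three dimensions are independent, which real analytics data may not support.

```python
from itertools import product

# Hypothetical usage shares; real values would come from the app's analytics.
DEVICES = {"mid-range Android": 0.62, "low-end Android": 0.21, "iPhone": 0.17}
NETWORKS = {"unstable 3G": 0.35, "4G": 0.55, "Wi-Fi": 0.10}
PAYMENTS = {"regional gateway": 0.58, "international card": 0.30, "other local method": 0.12}


def prioritised_matrix(min_share: float = 0.02) -> list[tuple[str, str, str, float]]:
    """Return (device, network, payment, share) combinations, most common first.

    Combinations below `min_share` of estimated sessions are dropped.
    Multiplying the shares assumes the three dimensions are independent.
    """
    combos = []
    for (dev, d), (net, n), (pay, p) in product(DEVICES.items(), NETWORKS.items(), PAYMENTS.items()):
        share = d * n * p
        if share >= min_share:
            combos.append((dev, net, pay, share))
    return sorted(combos, key=lambda c: c[3], reverse=True)


# Usage: print the five combinations a new regression run should cover first.
for dev, net, pay, share in prioritised_matrix()[:5]:
    print(f"{share:6.1%}  {dev} / {net} / {pay}")
```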

Hunting down the payment gateway issues

Payment failures were the most damaging problem, both financially and in terms of customer trust. We ran a dedicated investigation phase in the sandbox environment, systematically testing every failure scenario the gateway documentation described, along with failure modes it did not mention.

What we found was a combination of three issues. First, race conditions in the transaction confirmation flow caused duplicate order states under poor network conditions. Second, incorrect handling of specific decline codes left users on a blank screen instead of a clear error message. Third, a session timeout edge case failed silently on the client side without notifying the server, so orders appeared complete to users but were never processed.
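Duplicate order states from a confirmation race are commonly addressed with idempotency: each checkout attempt carries a unique key, and the server returns the original result for any repeated confirmation instead of creating a new order. The sketch below illustrates that general pattern in Python. It is not the fix the client's developers shipped. `OrderConfirmations`, `confirm` and the `ORD-` identifiers are hypothetical, and the in-memory dictionary and lock stand in for what would normally be a database unique constraint on the idempotency key.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    order_id: str
    status: str


class OrderConfirmations:
    """Hypothetical confirmation handler keyed by a per-checkout idempotency key.

    A retried or duplicated confirmation for the same checkout attempt gets the
    original order back instead of creating a second one.
    """

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._orders_by_key: dict[str, Order] = {}
        self._next_id = 1

    def confirm(self, idempotency_key: str) -> Order:
        # The lock serialises the check-then-create step so two concurrent
        # confirmations cannot both see "no order yet" and both create one.
        with self._lock:
            existing = self._orders_by_key.get(idempotency_key)
            if existing is not None:
                return existing  # duplicate or retry: replay the first result
            order = Order(order_id=f"ORD-{self._next_id}", status="confirmed")
            self._next_id += 1
            self._orders_by_key[idempotency_key] = order
            return order


# Usage: simulate 20 duplicate confirmations arriving concurrently, as can happen
# when a client on a poor connection retries while the first request is in flight.
service = OrderConfirmations()
with ThreadPoolExecutor(max_workers=8) as pool:
    results = list(pool.map(lambda _: service.confirm("checkout-abc"), range(20)))
print({order.order_id for order in results})
```

The usage lines also double as the shape of a regression test for this class of bug: fire the same confirmation many times in parallel and assert that exactly one order exists.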

Each issue came with a fully documented reproduction path, environment details, and a severity assessment. Not "payment sometimes fails." Exactly when, exactly why, and exactly what the user experiences when it does. That level of specificity is what allows a development team to fix something quickly and confidently.

Load and stress testing — finding the ceiling before customers do

Performance testing is one of those things that always gets deprioritised until something explodes. This client had a promotion coming up — their biggest sales event of the year. We ran load tests simulating realistic concurrency levels based on their traffic data, then pushed into stress territory to find where the system degraded and where it broke.
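Realistic concurrency levels can be derived from traffic data with Little's law: the average number of users in the system equals their arrival rate multiplied by how long each one stays (L = λ × W). The Python sketch below applies it, then adds stress stages beyond the expected peak. The arrival rate, session length and stress multipliers are hypothetical numbers for illustration, not the client's figures.

```python
import math


def concurrency_from_traffic(arrivals_per_second: float, avg_session_seconds: float) -> int:
    """Little's law, L = λ × W: average concurrent users = arrival rate × time each user stays."""
    return math.ceil(arrivals_per_second * avg_session_seconds)


def load_profile(baseline_users: int,
                 stress_multipliers: tuple[float, ...] = (1.5, 2.0, 3.0)) -> list[tuple[str, int]]:
    """Test stages: the expected peak first, then stress steps beyond it to find the breaking point."""
    stages = [("expected peak", baseline_users)]
    stages += [(f"stress x{m:g}", math.ceil(baseline_users * m)) for m in stress_multipliers]
    return stages


# Hypothetical promotion figures: 2.5 new sessions per second, 2-minute average session.
peak = concurrency_from_traffic(arrivals_per_second=2.5, avg_session_seconds=120)
for name, users in load_profile(peak):
    print(f"{name}: {users} concurrent users")
```

With these assumed inputs the expected peak works out to 300 concurrent users, followed by stress stages at 450, 600 and 900.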

The results were uncomfortable reading — but far better to read them in a test report than in a customer complaint thread. We identified three specific bottlenecks: a database query in the product listing API that degraded non-linearly above 200 concurrent users, a session management service that started dropping connections under sustained load, and a third-party image delivery service that had no fallback behaviour when it timed out, causing entire page renders to hang.
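The image-delivery bottleneck is a classic unbounded wait on a third-party dependency. A common remedy is to cap the wait and fall back to a placeholder so the rest of the page can still render. This minimal Python `asyncio` sketch shows that pattern, assuming a server-side render. `fetch_image_url` is a hypothetical stand-in that simulates the image service with a sleep, and the placeholder path, the `images.example.com` URL and the 0.5-second timeout are illustrative values.

```python
import asyncio

PLACEHOLDER_URL = "/static/img/placeholder.png"  # hypothetical local fallback asset


async def fetch_image_url(product_id: str, delay: float) -> str:
    """Hypothetical stand-in for the third-party image service; `delay` simulates its latency."""
    await asyncio.sleep(delay)
    return f"https://images.example.com/{product_id}.jpg"


async def image_url_with_fallback(product_id: str, delay: float, timeout: float = 0.5) -> str:
    """Bound the wait on the image service and fall back instead of blocking the render."""
    try:
        return await asyncio.wait_for(fetch_image_url(product_id, delay), timeout=timeout)
    except asyncio.TimeoutError:
        return PLACEHOLDER_URL


async def render_listing(delays: dict[str, float]) -> dict[str, str]:
    # Resolve all images concurrently; one slow image can no longer hang the whole listing.
    ids = list(delays)
    urls = await asyncio.gather(*(image_url_with_fallback(pid, delays[pid]) for pid in ids))
    return dict(zip(ids, urls))


# Usage: one fast image and one that would take 2 seconds without a timeout.
result = asyncio.run(render_listing({"sku-1": 0.01, "sku-2": 2.0}))
print(result)
```

Here the fast image resolves normally, while the slow one is replaced by the placeholder after half a second, so the listing completes instead of hanging.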

The dev team fixed two of the three before the promotion. The third was mitigated with a targeted infrastructure adjustment. The promotion ran without a single performance-related incident.

What changed in the team dynamic

This is the part of the story that we find most meaningful — because it was not planned. It happened organically as trust was built through consistent delivery.

Within a few months of the engagement, the client started looping us into conversations that had nothing to do with test execution. Design reviews for new features. Technology decisions — should we switch payment providers? Is this third-party SDK reliable? Architecture discussions about a planned microservices migration.

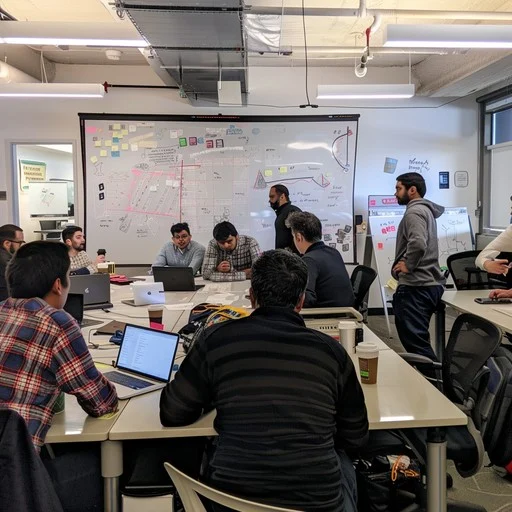

We started leading their scrum ceremonies. Not because we pushed for it, but because having someone in the room who understood both the technical quality implications and the business impact of every decision turned out to be genuinely useful — and it showed.

Today, our role with this client goes well beyond what most people think of when they think of a QA agency. We are involved from the moment a new feature is a sketch on a whiteboard. We shape how it is designed so it can be tested. We flag technical risks before a line of code is written. We own the release gate. And when something does go wrong — because occasionally things still do — we are the first call, not the last resort.

"They are not just a QA vendor anymore. They are part of how we make decisions. I cannot imagine running a release without them in the loop."

— Client's CTO, six months into the engagement

What we would tell any e-commerce team reading this

If your release process feels like a gamble — if your team dreads Mondays after a Friday deployment, if your payment flow has "quirks" you have learned to live with, if performance testing is something you plan to do "when things settle down" — the time to fix that is not after the next big incident. It is before it.

Stability is not the absence of bugs. It is a system — of coverage, of process, of ownership — that catches bugs before they reach your customers. That system does not build itself, and it is very hard to build it while simultaneously trying to ship features under pressure.

That is exactly the situation we are built for.

Is your product in a similar place?

Whether you're dealing with recurring production incidents, payment issues, performance unknowns, or a QA process that isn't keeping up — we'll take a clear-eyed look at your setup and tell you exactly what we see. No fluff, no sales pitch.

Let's Talk About Your Product →